Designing for Confidence, Not Completion

How I built Parleva, an AI conversation app that helps language learners start speaking in under a minute.

Language learners often freeze in real conversations despite completing lessons, streaks, and drills.

Parleva is a conversation-first AI practice system that removes placement tests, adapts live, and extends realistic scenarios beyond scripted exchanges.

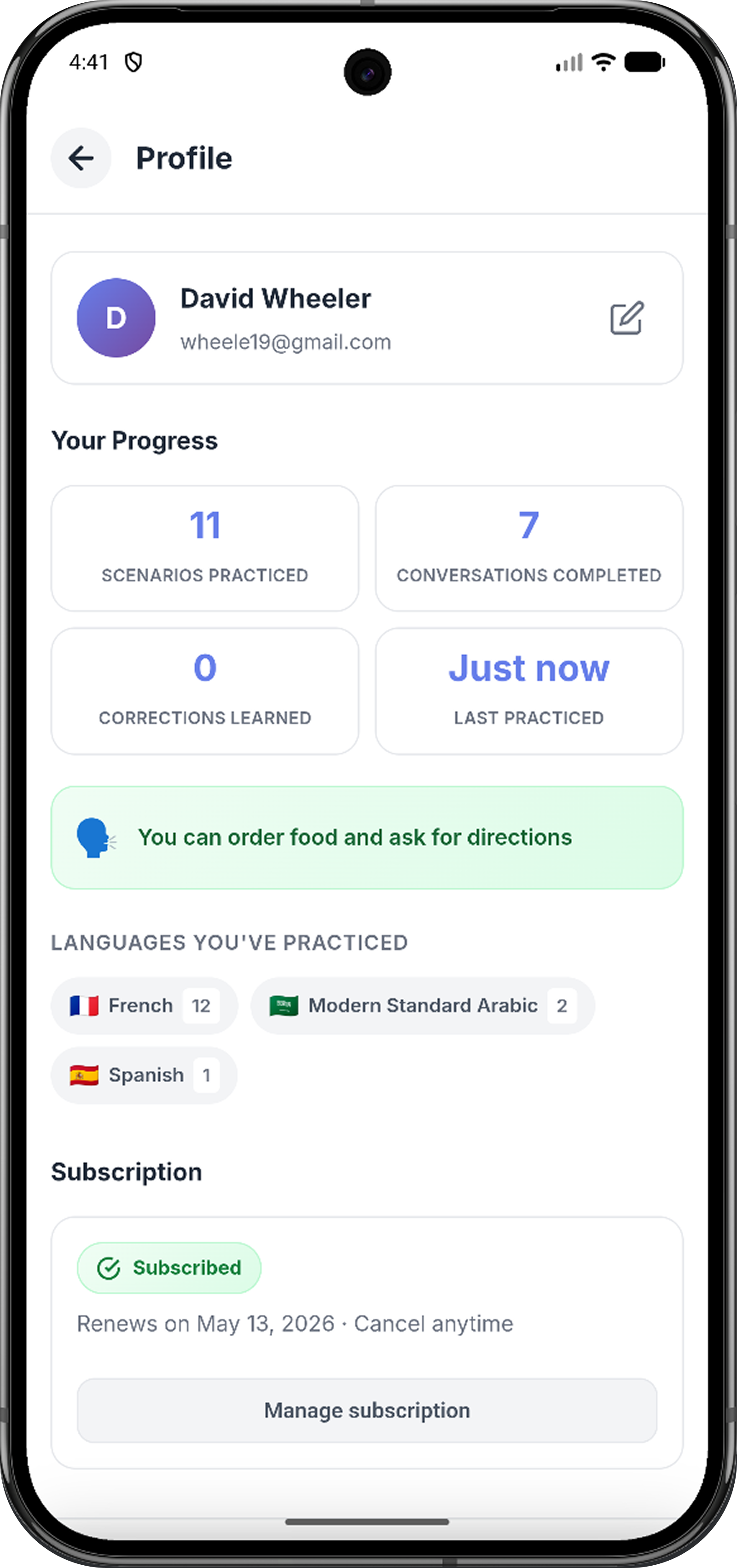

80% activation, 34-second median time to first conversation, 83% started within 2 minutes, 204 users returned for 2+ sessions.

The strongest signal was not activation alone. It was conversation depth.

At a glance

I led Parleva end-to-end as a solo product designer and builder — owning the full product loop from strategy and brand through design, AI behavior, implementation, analytics, and launch. Because I owned both design and implementation, every decision had to work beyond the screen, across product strategy, engineering feasibility, AI behavior, measurement, and growth.

The data told a clear story

Fast start → meaningful conversation depth → repeat behavior.

Most language apps measure what is easy to measure

Lessons completed. Streaks maintained. XP earned. Words recognized. Those signals can create the appearance of progress — but they do not always translate to the moment learners care about most:

Can I say something to a real person without freezing?

Most learners are not starting from zero. They may recognize words, pass quizzes, and complete modules. But when a real conversation becomes unpredictable, confidence disappears. The gap is not just knowledge. It is exposure.

Traditional language apps often treat conversation as something learners unlock after enough preparation. Parleva starts from a different belief:

If the goal is speaking, conversation should not be the reward at the end of learning. It should be the method.

- Lessons

- Quizzes

- Streaks

- Delayed speaking

- Completion

- Progress as points

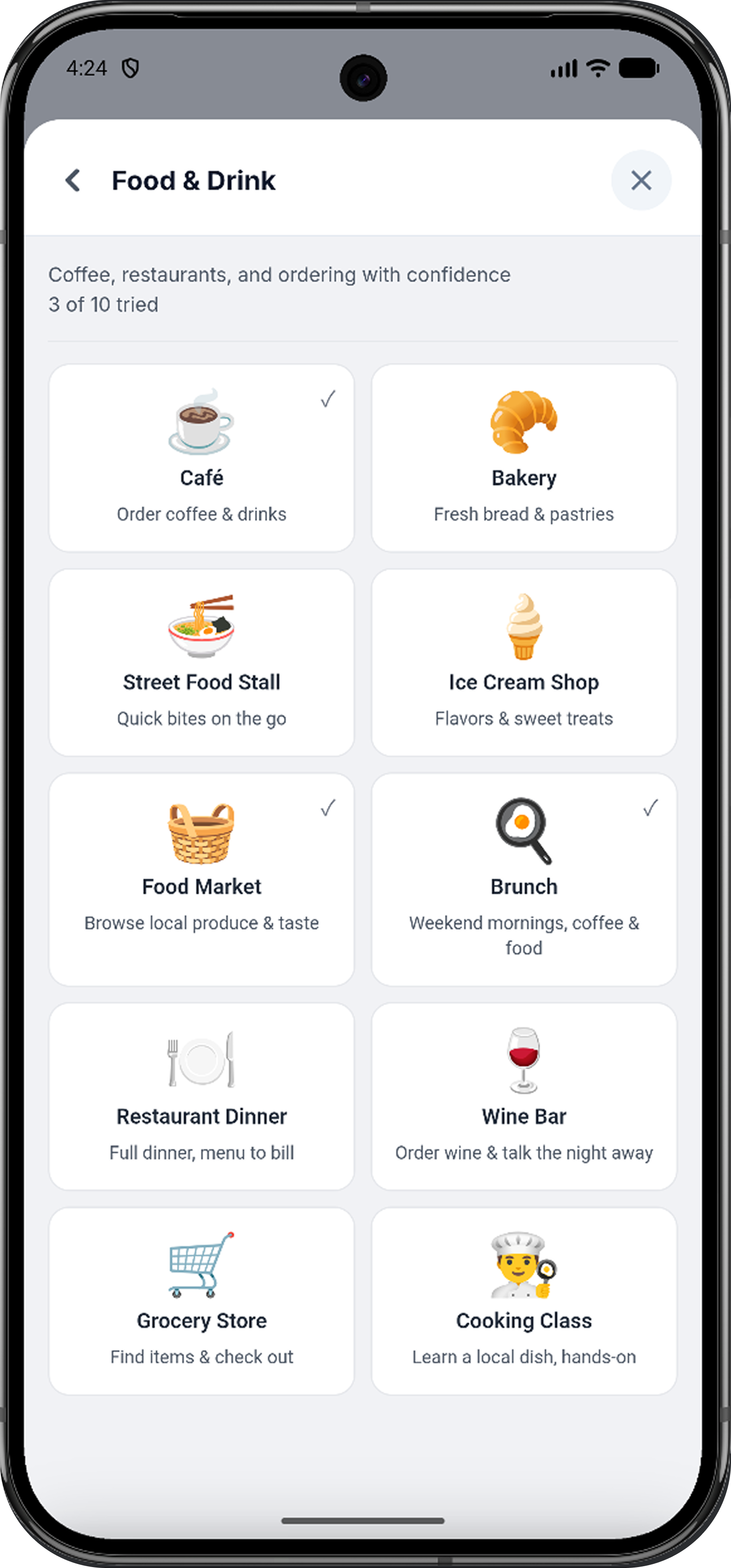

- Scenarios

- Realistic conversation

- Calm consistency

- Immediate speaking

- Confidence

- Progress as capability

Confidence is built through exposure, not completion

The core insight behind Parleva was that speaking confidence is experiential. It comes from staying in the moment long enough to realize you can handle it — even imperfectly.

That meant the product could not feel like a lesson with a chat feature attached. It had to feel like a safe practice space where learners could enter a realistic scenario, stumble, recover, continue, and gradually feel less afraid of the next exchange.

Before designing screens, I defined the foundation

The brand was built around a clear positioning idea: Practice real conversations. Build real confidence. That positioning shaped the product experience as much as the marketing.

| Typical app messaging | Parleva messaging |
|---|---|
| "Complete your lesson" | "Start a conversation" |
| "Keep your streak" | "Keep going" |
| "You missed 3 days" | "You're getting more comfortable" |
| "Don't fall behind" | "Try saying it this way" |

Three product questions shaped the architecture

How do we get users into conversation before anxiety or friction builds?

How do we keep conversations realistic without overwhelming learners?

How do we motivate repeat practice without streak pressure or gamified guilt?

Three systems working together

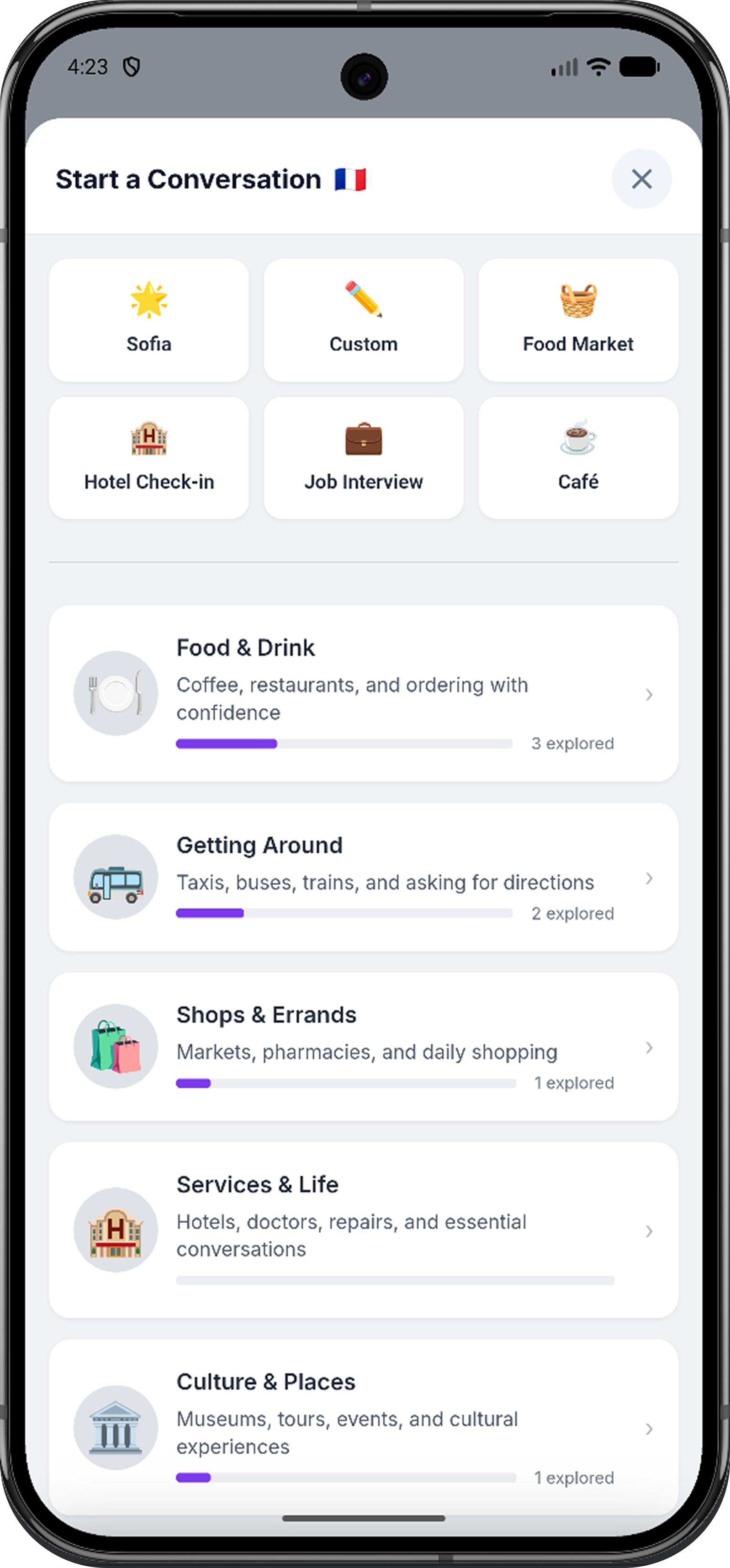

Parleva is not a language course with a conversation mode. It is a conversation-first practice product. A learner chooses a scenario and starts talking. The AI plays the role, adapts to the learner's ability, and provides focused coaching — no placement test, no XP, no lesson map required.

1 — Conversation Engine

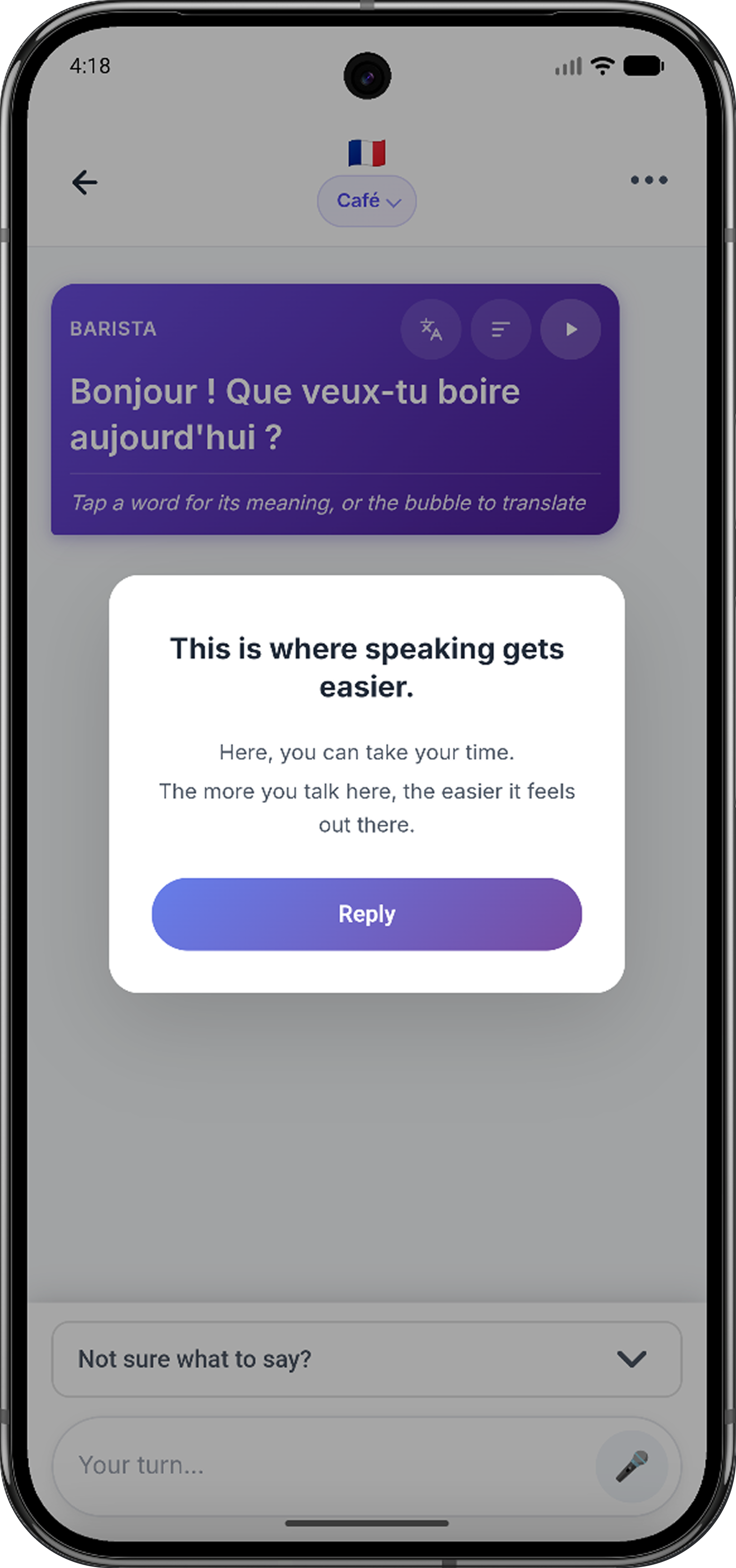

Most language practice tools close the scene as soon as the task resolves. Parleva doesn't. The barista follows up. The stranger asks where you are from. The hotel receptionist adds a small complication. Each scenario is designed with conversational hooks that create realistic follow-ups, light complications, and opportunities to recover. The goal is productive discomfort — the kind that helps learners practice the part of conversation most apps avoid.

| Step | Purpose |
|---|---|
| User message | Gets the learner actively producing language |
| AI responds in role | Preserves realism and scenario immersion |
| One coaching signal | Helps without overwhelming |
| Suggested replies | Prevents the blank-page moment |
| Next turn | Keeps the learner in conversation |
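The loop in the table can be sketched as a small data structure. This is a minimal illustration, not Parleva's actual implementation: the names `Turn`, `CoachingSignal`, and `build_turn` are hypothetical, and the in-role reply is passed in as a plain string rather than generated by a model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CoachingSignal:
    kind: str     # "praise" or "correction" (hypothetical labels)
    message: str


@dataclass
class Turn:
    user_message: str
    ai_reply: str                       # the in-role response
    coaching: Optional[CoachingSignal]  # at most one signal per turn
    suggestions: list = field(default_factory=list)


def build_turn(user_message, ai_reply, candidate_signals, suggestions):
    """Assemble one turn, keeping only the first coaching signal.

    Assumption: candidate_signals is already ordered by priority,
    so dropping everything after the first item is safe.
    """
    coaching = candidate_signals[0] if candidate_signals else None
    return Turn(user_message, ai_reply, coaching, list(suggestions))
```

Keeping the "one signal" rule in the data model, rather than in the prompt alone, means a turn cannot carry two pieces of feedback even if the model produces them.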

2 — Adaptive Intelligence

Parleva has no placement test. Placement tests create friction before value and can reinforce the anxiety the app is trying to reduce. Instead, Parleva calibrates through use. Every conversation generates a live signal based on the learner's actual output: message length, correctness, and language choice. The learner never has to declare their ability. They just start talking, and the product meets them where they are.

Calibration happens through use, not a placement test.
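One way to picture calibration through use is a running level estimate that each message nudges up or down. The sketch below is an assumption-heavy illustration: the three inputs come from the description above (message length, correctness, language choice), but the field names, the weights, the 20-word cap, and the use of an exponential moving average are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MessageSignal:
    word_count: int               # message length
    error_count: int              # mistakes flagged in the message
    target_language_ratio: float  # share of words in the target language, 0..1


def message_score(signal: MessageSignal) -> float:
    """Map one message to a 0..1 score. Weights are illustrative only."""
    if signal.word_count <= 0:
        return 0.0
    length = min(signal.word_count / 20, 1.0)  # assumed cap at 20 words
    correctness = max(1.0 - signal.error_count / signal.word_count, 0.0)
    language = min(max(signal.target_language_ratio, 0.0), 1.0)
    return 0.3 * length + 0.4 * correctness + 0.3 * language


def update_level(current: float, signal: MessageSignal, alpha: float = 0.3) -> float:
    """Exponential moving average: recent messages count more than old ones."""
    return (1 - alpha) * current + alpha * message_score(signal)
```

Because the estimate moves a little with every message, a learner who has a rough turn is not locked into an easier level, and one strong reply does not suddenly make the scenario much harder.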

3 — Motivation Model

Parleva has no XP, streak pressure, leaderboards, or "you missed a day" notifications. Speaking requires vulnerability. You have to be willing to sound imperfect, pause, get something wrong, and try again. The product needs to protect that psychological safety, not add pressure on top of it.

| Typical motivation model | Parleva |
|---|---|
| XP | Real-world practice |
| Streaks | Calm consistency |
| Loss aversion | Intrinsic confidence |
| Leaderboards | Personal capability |
| Pressure to return | Reason to return |

Parleva does not ask: How do we make users feel bad for leaving?

It asks: How do we make each conversation feel worth coming back to?

Five decisions that shaped the experience

No placement test

Calibration happens invisibly through conversation. Users reach value before being asked to define themselves.

Conversation-first onboarding

No tutorial. Users pick a scenario and begin. The product teaches itself through use — before hesitation builds.

Minimal interface

The conversation is the interface. Feedback and support appear only when useful — not as a permanent dashboard.

One piece of feedback at a time

Either praise or correction — not both. One signal keeps the rhythm conversational and the learner moving forward.

Suggestions as confidence scaffolding

Suggested replies at safe, natural, and stretch levels prevent the blank-page moment where anxiety takes over.

Three moments that define the experience

First-time experience

The first conversation is underway after a median of 34 seconds. The first experience is not preparation. It is the product.

Conversation loop

The objective is not to complete a module. The objective is to stay in conversation.

Returning user

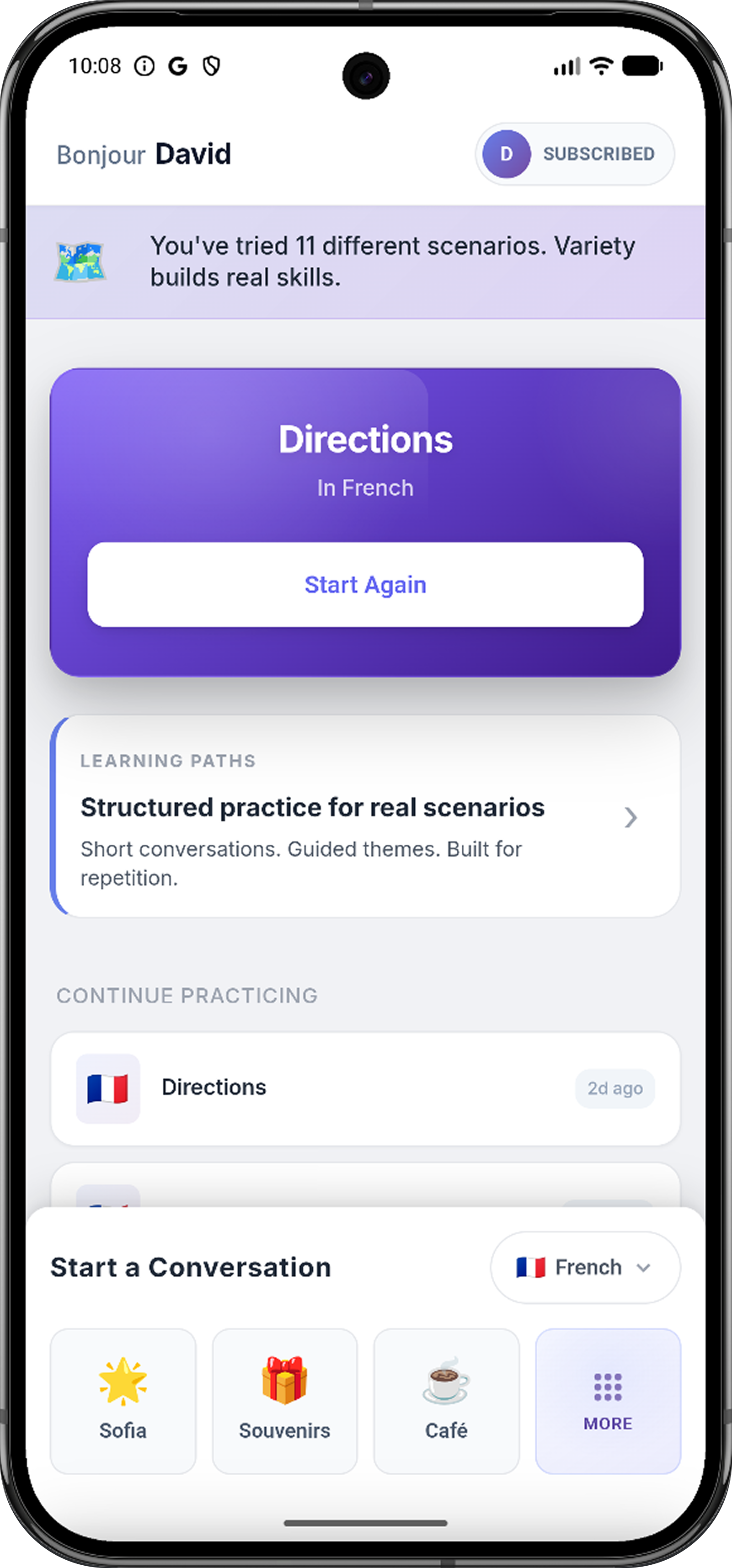

Returning users are not greeted with guilt, streak loss, or a dashboard of missed activity. They return to scenarios and start again.

Early data showed the core experience was working

The early data validated the decision to remove the gates and get learners into the behavior quickly. For a product built around speaking confidence, the first meaningful moment is the first exchange. Parleva compressed the time to that moment to a median of 34 seconds.

I used 10+ turns as a proxy for meaningful conversation depth. It indicated that the learner had moved beyond testing the app and stayed long enough to adapt, recover, and continue. The 232 sessions that reached that threshold showed the behavior happened repeatedly, not just once.

The most important signal was not any single metric in isolation. It was the relationship between depth and return behavior. 204 users returned for multiple sessions — active users averaged 2.2 sessions — suggesting that users who entered the experience often explored beyond a single interaction. Speed helped them start, but depth helped them believe the experience was worth repeating.
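The metrics in this section can be derived from simple session records. The sketch below is illustrative rather than the analytics pipeline actually used: the `Session` fields and the `summarize` function are hypothetical, and time to first message is recorded per session for simplicity. Only the 10-turn depth threshold and the 2+ session definition of a returning user come from the text above.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median

DEPTH_THRESHOLD = 10  # turns; the depth proxy described above


@dataclass
class Session:
    user_id: str
    turns: int
    seconds_to_first_message: float


def summarize(sessions):
    """Compute start speed, depth, and return behavior from session records."""
    if not sessions:
        raise ValueError("no sessions to summarize")
    sessions_per_user = Counter(s.user_id for s in sessions)
    return {
        "median_seconds_to_first_message": median(
            s.seconds_to_first_message for s in sessions
        ),
        "deep_sessions": sum(1 for s in sessions if s.turns >= DEPTH_THRESHOLD),
        "returning_users": sum(1 for n in sessions_per_user.values() if n >= 2),
        "sessions_per_active_user": len(sessions) / len(sessions_per_user),
    }
```

Computing depth and return behavior from the same records makes it possible to ask the follow-up question directly: do users with at least one deep session come back more often than those without?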

Users talked about confidence, not features

The strongest qualitative signals did not praise features. They described a shift in identity.

"It helped me to have a voice."

This was the clearest validation of the product's emotional purpose. The user was not describing a UI pattern or a technical capability. They were describing a change in how they related to themselves as a speaker.

"There is no better way to learn language than through real conversation."

"Ideal for learners who want real conversational practice."

Senior-level judgment requires acknowledging what you gave up

No gamification vs. retention pressure

Removing streaks and XP removes one of the most reliable engagement mechanics in consumer apps. Parleva is betting that confidence-driven engagement is more aligned with its purpose than guilt-driven return behavior. That means the core conversation loop has to stand on its own.

Invisible intelligence vs. perceived simplicity

The adaptive level system does meaningful work in the background, but invisible systems can be hard for users to appreciate. If the AI adapts well, the experience simply feels natural. The design direction is to surface adaptation through subtle moments of recognition, not scores or dashboards.

Open conversation vs. structured learning

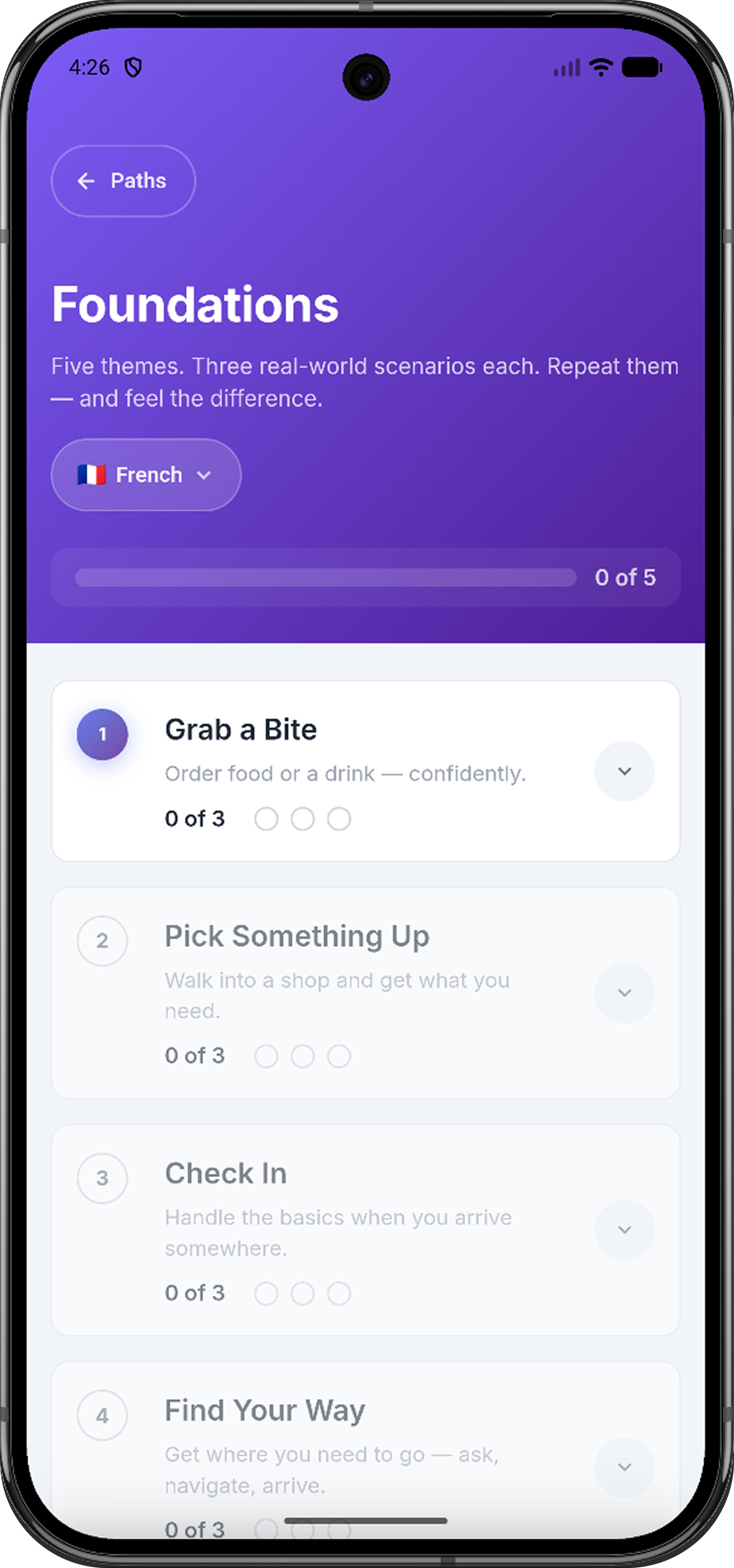

Parleva's open-ended model gives learners freedom, but some users still want direction. Too much structure would make Parleva feel like the apps it was designed to move beyond. The roadmap addresses this through lightweight learning paths that support conversation without replacing it.

AI flexibility vs. product consistency

LLMs are powerful because they can generate natural conversation. They are risky for the same reason. Parleva needed more than a prompt — it needed a behavior system defining rules for role consistency, correction boundaries, suggestion logic, and failure handling. The AI had to feel natural, but the product behavior had to remain designed.
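A behavior system of this kind can be pictured as explicit rules checked against every candidate model response. The sketch below is a hypothetical illustration, not Parleva's actual rule set: `BehaviorRules`, `violations`, `safe_reply`, the banned phrase, and the fallback line are all assumptions. The one-signal limit and the safe, natural, and stretch suggestion levels are taken from the design decisions described earlier.

```python
from dataclasses import dataclass

FALLBACK_REPLY = "Sorry, could you say that again?"  # hypothetical in-role fallback


@dataclass(frozen=True)
class BehaviorRules:
    max_coaching_signals: int = 1                       # correction boundary
    suggestion_levels: tuple = ("safe", "natural", "stretch")
    banned_phrases: tuple = ("as an ai",)               # would break the role


def violations(rules, reply, coaching_signals, suggestions):
    """Return the rule violations for one candidate model response.

    suggestions is a dict mapping a level name to the suggested text.
    """
    problems = []
    if not reply.strip():
        problems.append("empty reply")
    if any(phrase in reply.lower() for phrase in rules.banned_phrases):
        problems.append("broke role")
    if len(coaching_signals) > rules.max_coaching_signals:
        problems.append("too many coaching signals")
    if sorted(suggestions) != sorted(rules.suggestion_levels):
        problems.append("suggestion levels mismatch")
    return problems


def safe_reply(rules, reply, coaching_signals, suggestions):
    """Failure handling: fall back to a fixed in-role line if any rule is broken."""
    if violations(rules, reply, coaching_signals, suggestions):
        return FALLBACK_REPLY
    return reply
```

Checking output against rules after generation keeps the product behavior designed even when the prompt alone does not hold.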

Activation is not the same as confidence

Getting users into a conversation quickly matters. The 34-second median time to first conversation showed that onboarding was working. But the deeper challenge was helping users stay in the conversation long enough for the experience to become meaningful.

The strongest signal came from conversation depth. Once a session crossed into 10+ turns, it started to resemble what Parleva was designed for: not a lesson, but a real exchange.

The question then becomes: How do we help more people stay long enough for the product to work?

That shifted the next iteration toward stronger scenario hooks, clearer suggestions, more natural voice pacing, better continuity, and post-session reflection.

If I were starting again, I would instrument conversation quality earlier — not just whether users started, but where they stalled, which prompts created momentum, and which scenarios helped beginners recover fastest.

AI was leverage, not authorship

How AI was used in this project

Claude Code and ChatGPT helped accelerate exploration, implementation support, prompt iteration, and edge-case testing. But the important design work was not "using AI."

It was directing it. That meant defining behavior rules, testing failure modes, evaluating output quality, tightening conversation patterns, and deciding what the product should and should not do.

The product judgment still had to come from me.

Helping more users reach meaningful conversation depth

Memory and Continuity

Remember what a user practiced and where they struggled — making each return feel more personal, not more complex.

Progress That Feels Human

Reflect real-world capability — scenarios practiced, conversations completed, topics ready to revisit — not XP or abstract levels.

Deeper Session Insight

A calm post-session synthesis: what went well, what stretched the learner, one thing to carry forward. A reflection, not a report card.

Parleva began with a simple belief: if the goal is speaking, conversation should be the method. Early data showed that users could reach conversation quickly. The deeper signal pointed to meaningful conversation depth as the path to return behavior. And the strongest user feedback pointed to something more important than engagement: a shift in identity.

Every design decision from here is a path back to that moment.